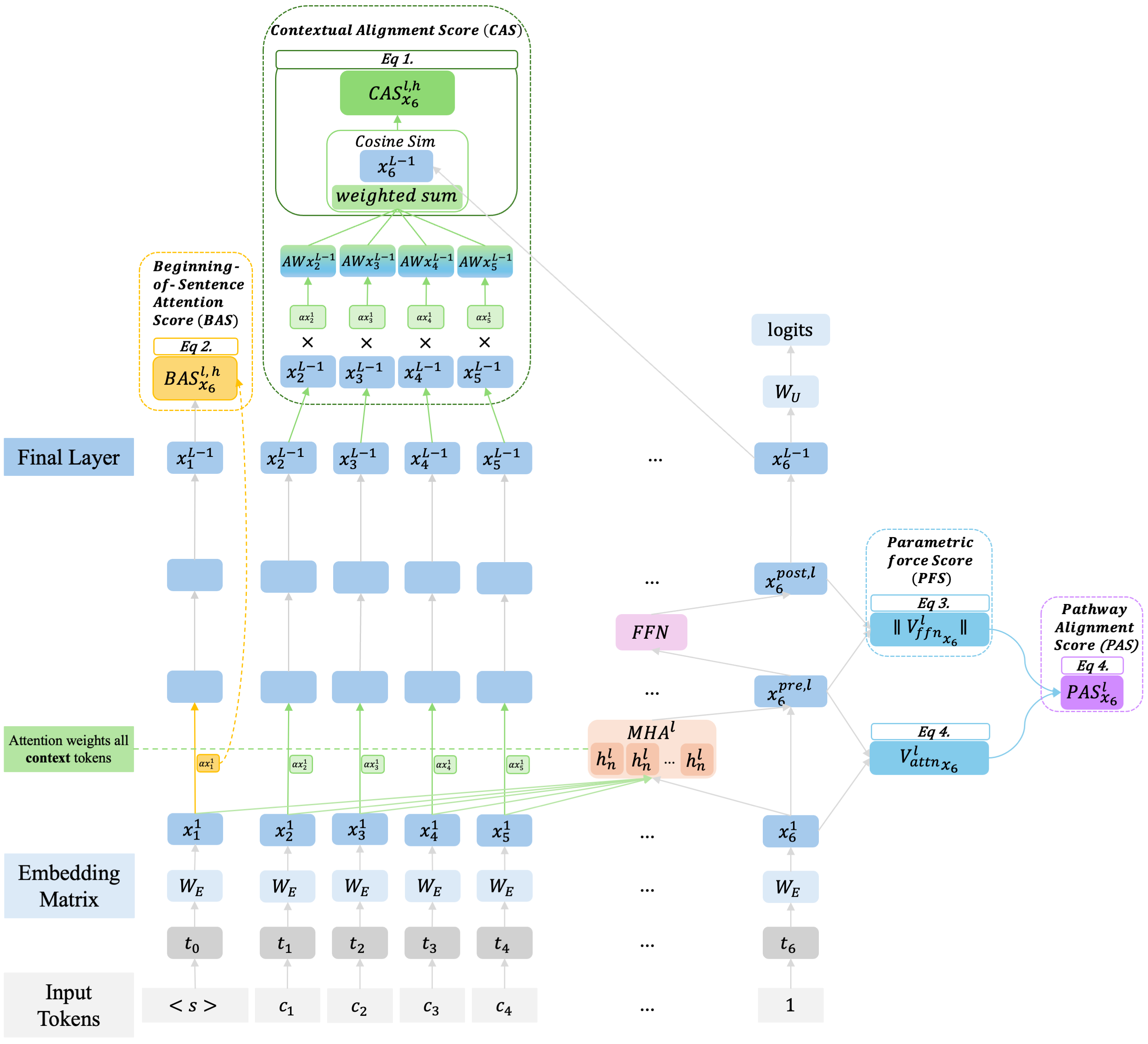

Maxime Dassen

Hi, I'm Maxime (she/her). I am a PhD student in Artificial Intelligence at the IRLab of the University of Amsterdam, advised by Andrew Yates and Evangelos Kanoulas. My research asks how language and vision-language models coordinate what they observe with what they know: when they trust external evidence, when they shouldn't, and what their internal computation tells us about the difference. My work sits across retrieval-augmented systems, multimodal grounding, and trustworthy AI, with the goal of building models people can actually rely on. In 2025 I was a Visiting PhD Student at the Johns Hopkins University HLTCOE as part of the SCALE 2025 program, and I will return for SCALE 2026 to work on multimodal RAG.

Selected Research

I'm interested in machine learning, deep learning, generative AI, and multimodal learning. Some papers are highlighted.

Template adapted from this website.